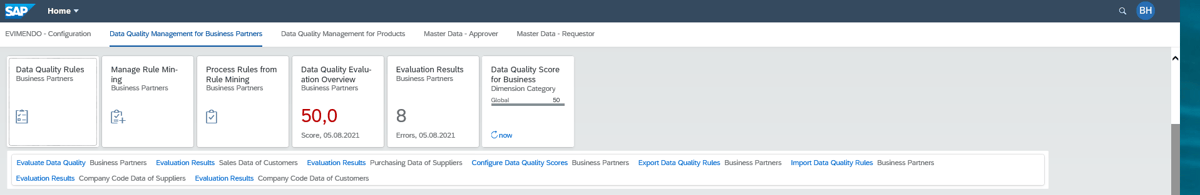

Since release 1809 of SAP S/4HANA, it has been possible to activate SAP Data Quality Management (DQM) and use it as a component of SAP Master Data Governance (MDG). As of release 1909, it can be used for product data, customer/vendor data and custom objects. The toolset includes various Fiori apps for measuring, displaying and improving data quality, for example in the material master or the business partner.

Among other things, the Fiori apps offer the option of importing and exporting data quality rules and executing evaluation runs against the dataset in the system. In addition, separate Fiori apps can be used to define dimensions and scores for the respective rules.

Are you looking for a project accelerator for your Data Quality Management?

Our content package EVIMENDO.dqm provides you with a preconfigured SAP DQM via transport. Thanks to the delivered rules, you get to know your data better and can then extend your Data Quality Management individually.

Rules via rules mining

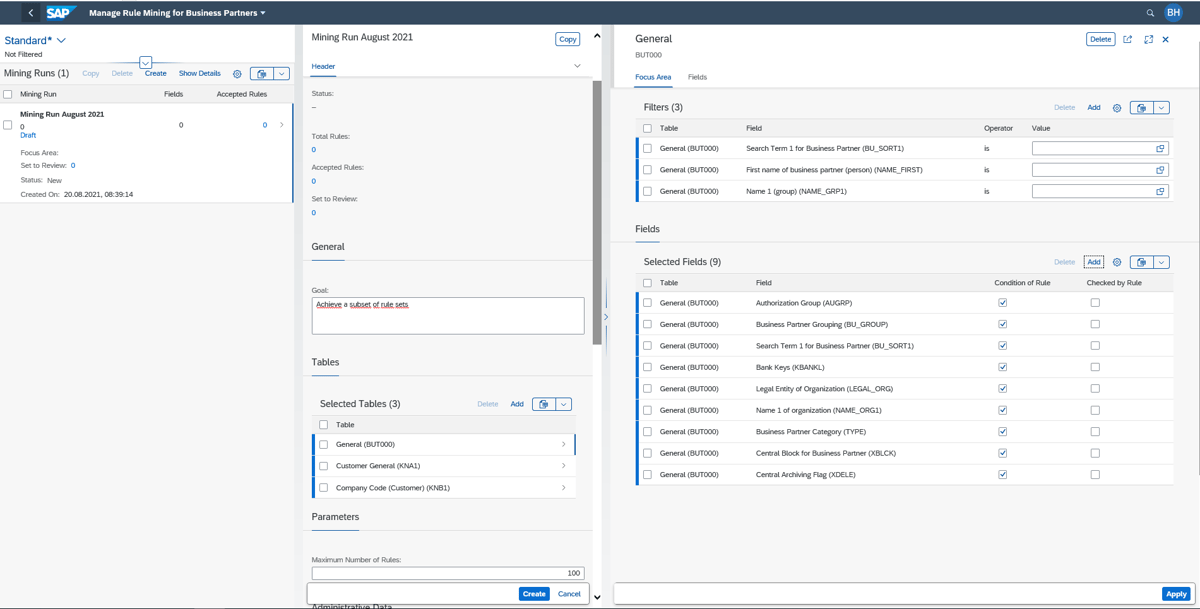

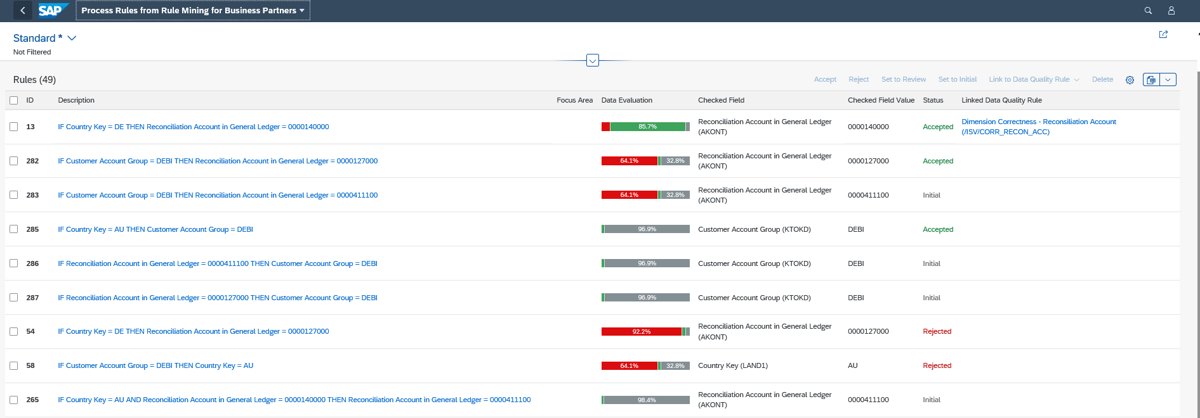

The rules can either be implemented by the user or generated using the rules mining app, which is also available in SAP DQM. We will go into more detail on the implementation of the rules and the associated routing/delegation below.

The rules mining app makes use of various HANA functions and a type of artificial intelligence (AI) to find possible combinations of filled fields and threshold values in the current dataset (material or business partner).

Specifically, the user has the option to select the desired combinations on a table basis, for example MARA or BUT000, in conjunction with other tables such as KNB1/KNA1, and have the AI evaluate them.
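To make the idea of mining "filled field" combinations more tangible, here is a minimal Python sketch. It is not the algorithm used by the rules mining app, which is not described here; it only illustrates one simple way such candidates could be found. The function `mine_fill_rules`, its parameters `min_share` and `min_records`, and the sample records are assumptions made for illustration. The keys `MTART` and `BRGEW` are used as plain dictionary keys modeled on MARA field names.

```python
from collections import defaultdict


def mine_fill_rules(records, group_field, candidate_fields,
                    min_share=0.9, min_records=3):
    """Propose 'if group_field == value, then field is filled' candidates.

    A candidate is proposed when at least `min_share` of the records in a
    group have the field filled and the group has at least `min_records`.
    """
    groups = defaultdict(list)
    for record in records:
        groups[record.get(group_field)].append(record)

    proposals = []
    for value, members in groups.items():
        if len(members) < min_records:
            continue  # too few records to trust the pattern
        for field in candidate_fields:
            filled = sum(1 for m in members if m.get(field) not in (None, ""))
            share = filled / len(members)
            if share >= min_share:
                proposals.append((group_field, value, field, share))
    return proposals


# Hypothetical material records, invented for this example.
materials = [
    {"MTART": "FERT", "BRGEW": 1.2},
    {"MTART": "FERT", "BRGEW": 0.8},
    {"MTART": "FERT", "BRGEW": 2.5},
    {"MTART": "FERT", "BRGEW": None},
    {"MTART": "DIEN", "BRGEW": None},
    {"MTART": "DIEN", "BRGEW": None},
    {"MTART": "DIEN", "BRGEW": None},
]

for proposal in mine_fill_rules(materials, "MTART", ["BRGEW"], min_share=0.75):
    print(proposal)  # ('MTART', 'FERT', 'BRGEW', 0.75)
```

In this sketch, the threshold plays the role of the "threshold values" mentioned above: a proposal only becomes a rule candidate if the pattern holds for a sufficiently large share of the existing records, and a person still decides whether to accept it.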

Quality dimensions

The generated rules can then be implemented as data quality rules at the click of a button. To categorize the rules, the user can work with dimensions in the app “Define Scores”.

Typically, companies use the following dimensions:

- Accessibility

- Timeliness

- Uniqueness

- Correctness

- Completeness

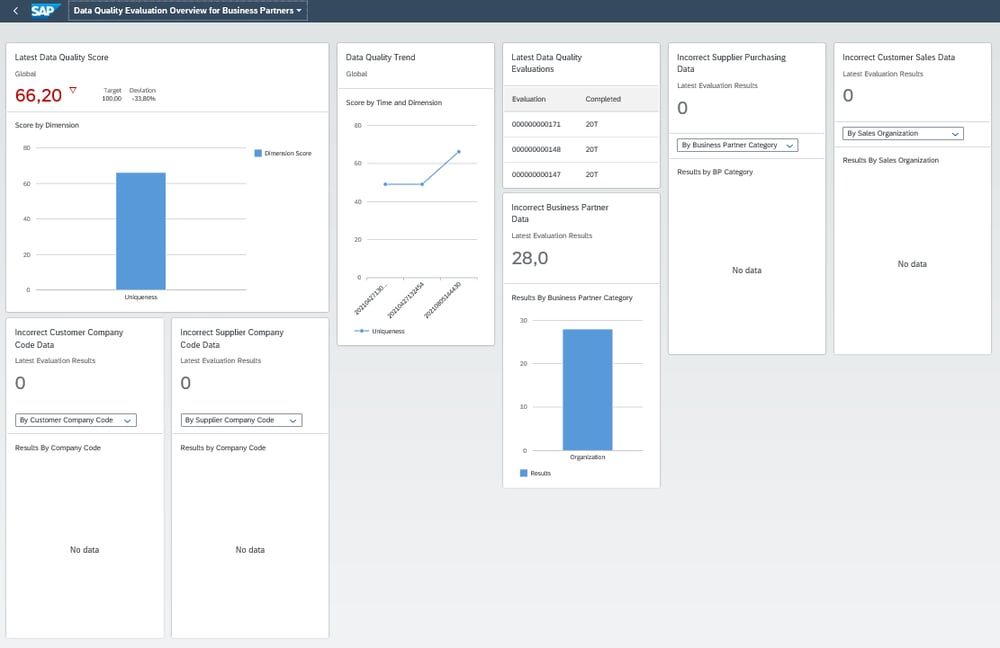

These dimensions can be created and assigned the desired threshold values for Critical, Warning and Target. Rules that have already been implemented can be assigned to the corresponding dimensions. During the evaluation, a data quality score is then calculated from the incorrect data records found, based on a weighting that you define.
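How weighting and thresholds could interact can be sketched in a few lines of Python. The exact formula SAP DQM uses is not given here, so the following rests on assumptions: each rule's score is taken as the percentage of in-scope records that pass, the dimension score is the weighted average of its rule scores, and the thresholds are read as lower bounds. All class names, rule names, weights and threshold values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RuleResult:
    name: str
    in_scope: int   # records selected by the rule
    failed: int     # in-scope records that violate the rule
    weight: float   # assumed relative weight within the dimension

    @property
    def score(self) -> float:
        """Percentage of in-scope records that pass the rule."""
        if self.in_scope == 0:
            return 100.0
        return 100.0 * (self.in_scope - self.failed) / self.in_scope


@dataclass
class Dimension:
    name: str
    critical: float  # scores below this are rated "critical"
    warning: float   # scores below this are rated "warning"
    target: float    # scores at or above this meet the target

    def rate(self, score: float) -> str:
        if score < self.critical:
            return "critical"
        if score < self.warning:
            return "warning"
        if score < self.target:
            return "below target"
        return "on target"


def dimension_score(results):
    """Weighted average of the rule scores assigned to one dimension."""
    total_weight = sum(r.weight for r in results)
    if total_weight == 0:
        raise ValueError("at least one rule needs a positive weight")
    return sum(r.score * r.weight for r in results) / total_weight


completeness = Dimension("Completeness", critical=60.0, warning=85.0, target=95.0)
results = [
    RuleResult("Postal code filled", in_scope=2000, failed=1000, weight=1.0),
    RuleResult("Tax number filled", in_scope=2000, failed=200, weight=3.0),
]
score = dimension_score(results)
print(f"{score:.1f}", completeness.rate(score))  # 80.0 warning
```

The first rule scores 50 and the second 90; with weights 1 and 3 the dimension lands at 80, which in this sketch falls between the Critical and Warning thresholds.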

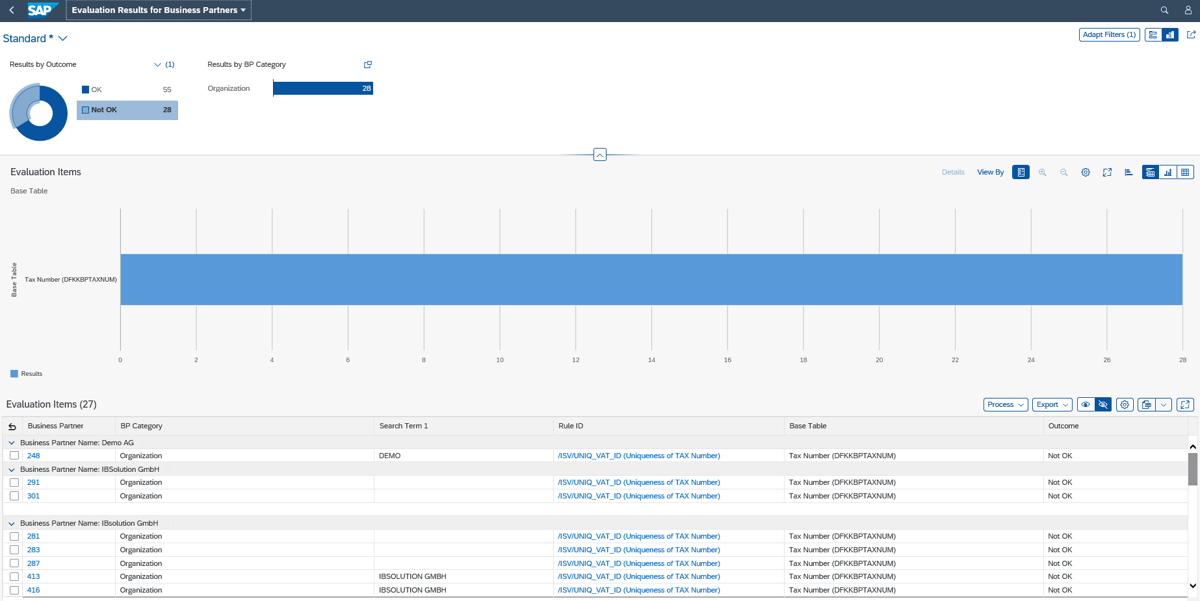

Useful evaluations

The evaluation is run in a separate app, which shows the user which data records failed and which rules they violated. In addition, the development of data quality over time is displayed graphically, based on all evaluations that have already been performed.

This allows companies to quickly see whether, for example, a data import has worsened the quality or the defined rules are achieving the desired effectiveness in terms of data quality.

From the evaluation results, it is possible to jump directly to a change request or a mass process. Alternatively, the affected data record can be assigned to the responsible department for correction.

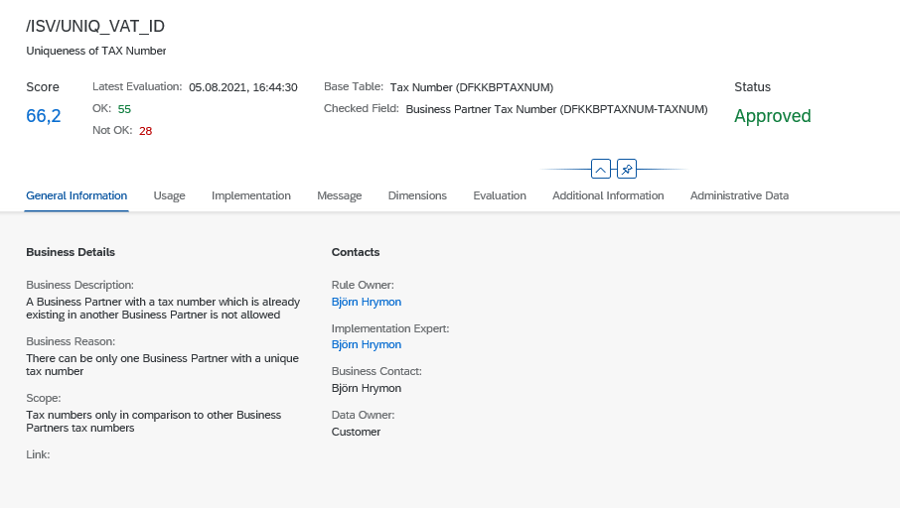

Rule owner, implementation expert, business contact

As mentioned, the creation and implementation of data quality rules follows a routing similar to the one used for correcting data records. This means, for example, that the specialist department can describe a rule and structure it thematically, and then pass it on to the R&D department for implementation. Each rule has a rule owner, an implementation expert (developer) and a business contact. These fields are backed by an authorization logic, which is customized when setting up SAP DQM.

Technical rules: scope and condition

The rules themselves always consist of two technical rules, the scope and the condition. The scope defines which data records are selected for the corresponding rule. The selected records form the 100% base value for that rule.

An example: A user has 10,000 business partners, which include 2,000 German business partners. If the user implements a rule that only applies to German business partners and selects the scope accordingly, 2,000 is the value for 100%. If 1,000 business partners are then marked as incorrect in the condition, 50% of the data is incorrect.
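The arithmetic of this example can be reproduced with a short Python sketch. It models scope and condition as two simple predicates. This is an illustration only, not how SAP DQM implements its rules; the `QualityRule` class, the `evaluate` function, the field names and the "postal code" condition are assumptions made up for this example.

```python
from dataclasses import dataclass
from typing import Callable

Record = dict  # hypothetical: one business partner as a plain dict


@dataclass
class QualityRule:
    name: str
    scope: Callable[[Record], bool]      # which records the rule applies to
    condition: Callable[[Record], bool]  # True if an in-scope record is correct


def evaluate(rule, records):
    """Return (in_scope_count, failed_count, failed_share) for one rule."""
    in_scope = [r for r in records if rule.scope(r)]
    failed = [r for r in in_scope if not rule.condition(r)]
    share = len(failed) / len(in_scope) if in_scope else 0.0
    return len(in_scope), len(failed), share


# Invented data mirroring the example: 10,000 partners, 2,000 of them German,
# and 1,000 of the German partners without a postal code.
records = (
    [{"country": "DE", "postal_code": ""} for _ in range(1000)]
    + [{"country": "DE", "postal_code": "69115"} for _ in range(1000)]
    + [{"country": "FR", "postal_code": ""} for _ in range(8000)]
)

rule = QualityRule(
    name="German partners need a postal code",
    scope=lambda r: r["country"] == "DE",
    condition=lambda r: bool(r["postal_code"]),
)

in_scope, failed, share = evaluate(rule, records)
print(in_scope, failed, f"{share:.0%}")  # 2000 1000 50%
```

Note that the 8,000 non-German partners also lack a postal code in this data set, yet they do not affect the result: the condition is only checked for records inside the scope, so the percentage refers to 2,000 records and not to all 10,000.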

When creating the rules, it is also possible to specify for which processes the rule should apply. This means that the user can select whether the rule is to be applied exclusively in the evaluation or additionally in an MDG/MDC process or a mass process.

This ensures that data quality is maintained even for future business partners that are created in the system or imported via an MDC process.
